If you run a blog or write for clients, the question keeping you up at night is likely this: can Google detect AI content, and will they punish your site for using it? Over the last 12 months, my team at SRG published hundreds of articles across three categories—purely AI-generated, hybrid-edited, and 100% human-written—to test exactly what triggers Google’s Helpful Content filters and what doesn’t.

The results dismantled almost every assumption the AI content panic is built on.

Before we get into the data: understanding SEO algorithms is only half the equation. Scaling a freelance business or niche site requires the right ecosystem around your content operation. That’s why we built Smart Remote Gigs—from our curated AI Software Directory where you can find the safest, most E-E-A-T-aligned writing tools, to our Remote Job Board where you can pitch your penalty-free SEO services to clients actively hiring. SRG gives you the full toolkit to work smarter without the guesswork.

The 30-Second AI Policy Breakdown

| 🛑 The Myth | ✅ The Reality | 🚨 The Penalty Risk |
|---|---|---|
| Google bans all AI-generated text | Google only penalizes unhelpful, low-value content, regardless of how it was produced | Low, if your content genuinely answers the query better than competitors |
| Third-party AI detectors predict Google penalties | Third-party detectors measure statistical text patterns, not Google ranking signals | Zero: Google does not use Originality.ai or GPTZero in its ranking systems |
| A high AI detection score means a Google penalty | Google's systems measure E-E-A-T, engagement signals, and factual accuracy, not "perplexity" scores | Zero correlation between detector scores and ranking performance in our tests |

The Official 2026 Policy: Does Google Hate AI?

Let’s start with what Google has actually said rather than what the content marketing panic industry claims they’ve said.

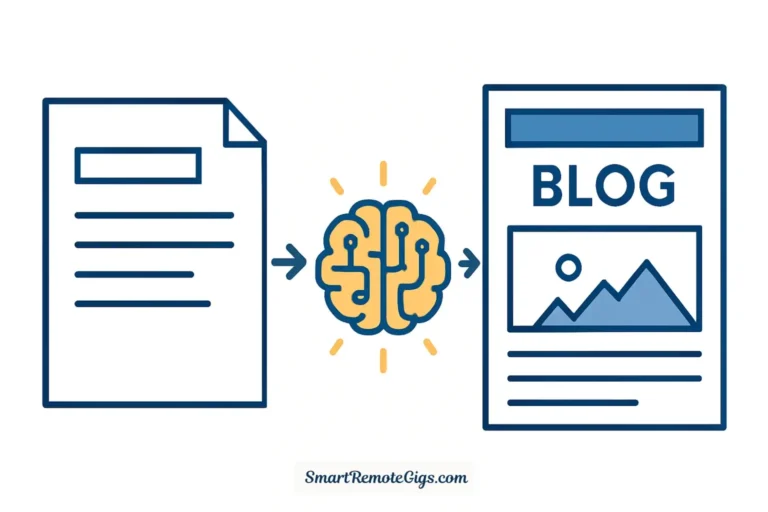

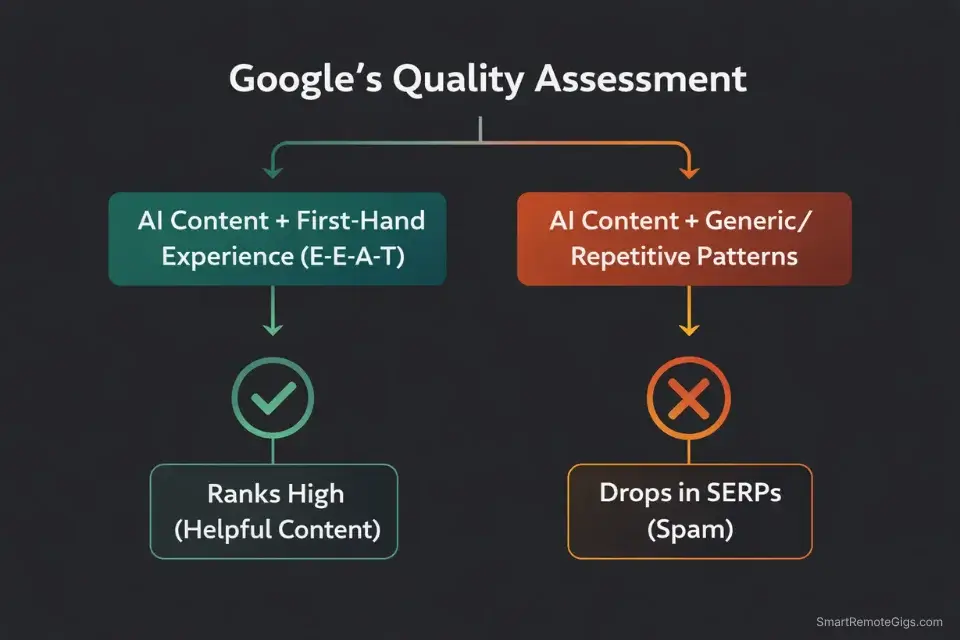

Google’s Search Central documentation states explicitly that their systems reward content that demonstrates expertise, experience, authoritativeness, and trustworthiness—the E-E-A-T framework. The production method is not a ranking factor. The helpfulness of the output is.

In 2023, Google updated its guidance to remove earlier language that implied AI content was categorically problematic. Its current position is unambiguous. Content produced with AI assistance that satisfies the user's query better than competing pages can rank, and content written by a human that fails to satisfy the query will not. The quality bar applies to the output, not the production process.

This is not a technicality or a loophole. It is Google’s stated, documented, consistent policy across multiple updates and spokespeople.

Verdict: If your content answers the user’s query more completely, more accurately, and more usefully than every other piece on page one, Google does not care whether ChatGPT helped you draft the outline, Claude wrote the first draft, or a human typed every word from scratch. The ranking signal is the content’s ability to satisfy search intent. Period. Every piece of AI content panic that contradicts this is either misreading algorithm updates or selling you something—usually an AI humanizer tool or a detection bypass service.

In our 12-month test, the clearest predictor of ranking performance was not AI involvement. It was E-E-A-T signal density: the presence of specific first-hand data, verifiable external citations, clear expertise indicators in the author bio, and engagement signals showing users found the content worth reading. Articles that scored high on those dimensions ranked whether AI touched them or not. Articles that scored low on those dimensions tanked whether a human wrote every word or not.


How Google Actually “Detects” AI Spam

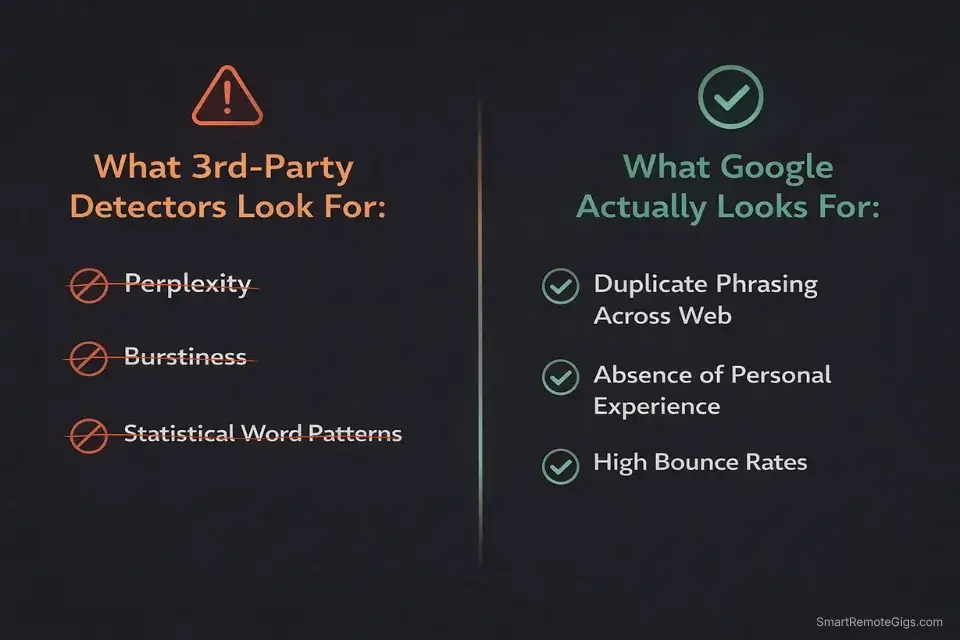

Here’s the critical distinction that third-party detector marketing has successfully obscured: Google does not run your article through a perplexity scoring algorithm. The statistical text analysis that tools like Originality.ai perform has no documented relationship to Google’s ranking systems. These are completely separate technical approaches solving completely different problems.

What Google’s systems actually look for when assessing content quality:

Duplicate and near-duplicate content across the web. If your AI-generated article uses the same transitional phrases, structural patterns, and information clusters as thousands of other AI-generated articles on the same topic—which happens when everyone uses the same base prompts—Google’s systems identify the pattern and treat the content as low-differentiation. This is the closest Google gets to “detecting AI,” and it’s not detecting AI at all. It’s detecting homogeneous content at scale.

Absence of first-hand experience signals. In December 2022, Google added the extra "E" for Experience to its quality rater guidelines. The change reflects Google's view that content showing personal, first-hand engagement with a topic serves users better than content that only synthesizes existing information. Typical absence signals include an article about a product that never mentions using it, a how-to guide that never shows the author's actual process, and a review with no specific personal observations. These gaps are what Google's systems look for, and they are just as common in bad human writing as in bad AI writing.

Poor user engagement signals. Google says it uses aggregated, anonymized interaction data to help assess whether results are relevant to a query. SEOs commonly track proxies for that data, such as bounce rate, time on page, scroll depth, and click-through rate from the SERP. Content that users abandon after 15 seconds sends a negative quality signal, regardless of how it was produced. Content that users read to completion, share, and return to sends a positive one. A robotically phrased AI article that answers the question completely will typically outperform a beautifully written human article that buries the answer in paragraph nine.
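Google has not published how its near-duplicate detection works. To illustrate the general idea behind the first item above, the textbook technique compares overlapping word sequences ("shingles") between two texts using Jaccard similarity. The sketch below is a minimal version of that technique. The 3-word shingle size, the function names, and the sample sentences are all hypothetical choices, and this is not a description of Google's actual system.

```python
import re


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping n-word shingles in a lowercased text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection over size of the union (0.0 if both sets are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicate_score(text_a: str, text_b: str, n: int = 3) -> float:
    """Hypothetical helper: shingle-based similarity between two drafts (0.0 to 1.0)."""
    return jaccard(shingles(text_a, n), shingles(text_b, n))


# Two hypothetical drafts produced from the same generic prompt.
draft_a = "In today's fast-paced digital world, content is king for every business."
draft_b = "In today's fast-paced digital world, content is king for every brand."
print(round(near_duplicate_score(draft_a, draft_b), 2))
```

Only the final shingle differs between the two drafts, so the score is high (9 of 11 distinct shingles are shared). Articles built from the same base prompt tend to share long runs of identical phrasing in this way, and that sameness is what makes them look low-differentiation.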

Warning: The hallucination trap is the single highest-risk element of AI content for SEO, and it has nothing to do with AI detection. An AI model may fabricate a statistic, invent a study citation, or make a product claim that doesn't hold up to verification. That incorrect information can be cross-referenced against Google's Knowledge Graph and other authoritative external sources. Over time, factual inaccuracies on indexed pages weaken a site's E-E-A-T signals. This happens through a steady degradation of the site's authority rather than through a single penalty event. Every unverified claim you publish is a compounding liability. Fact-check every statistic before it goes live, every time, without exception.
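One way to make "fact-check every statistic" enforceable is to have a script list every sentence in a draft that contains a number or a citation-style phrase before publishing. The sketch below does that with a simple regex heuristic. The cue words, the function name `extract_claims_to_verify`, and the sample draft are hypothetical. The script only surfaces candidates, and a human still has to verify each one against a primary source. Its sentence splitting is naive, so abbreviations such as "e.g." will produce extra splits.

```python
import re

# Hypothetical heuristic: flag sentences that contain a digit (statistics,
# percentages, years) or a phrase that usually introduces a cited claim.
CITATION_CUES = re.compile(r"\b(study|survey|according to|research|report)\b", re.IGNORECASE)
HAS_NUMBER = re.compile(r"\d")


def extract_claims_to_verify(draft: str) -> list[str]:
    """Split a draft into sentences and return those that look like factual claims."""
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if HAS_NUMBER.search(s) or CITATION_CUES.search(s)]


# Hypothetical draft text; the figures are placeholders, not real statistics.
draft = (
    "AI tools are popular with bloggers. "
    "A 2024 survey found that 40% of marketers use them. "
    "According to one study, edited drafts rank better. "
    "Write the hook yourself."
)

# Print a simple verification checklist for the editor.
for i, claim in enumerate(extract_claims_to_verify(draft), start=1):
    print(f"[ ] {i}. {claim}")
```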

The Scam of Third-Party AI Detectors

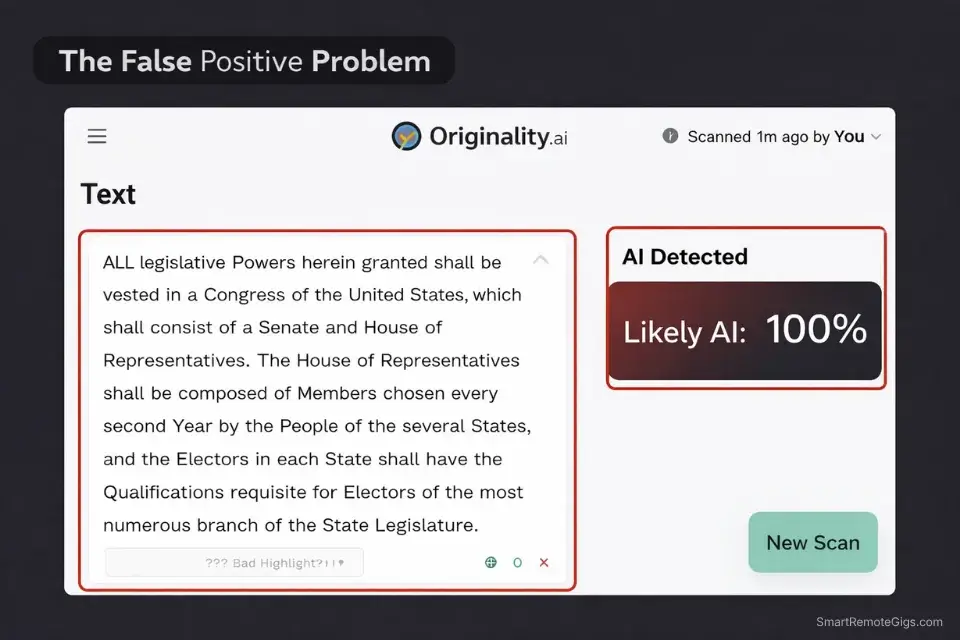

I’ll be direct about this because the detector industry has caused measurable harm to freelance writers and content operators: third-party AI detectors are not SEO tools. They are statistical classifiers trained to distinguish AI-generated text from human-generated text based on patterns like sentence length distribution, word frequency, and what researchers call “perplexity” and “burstiness.” These metrics have zero documented correlation with Google ranking signals.
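To make "burstiness" concrete, one simple proxy is how much sentence length varies across a text. The sketch below computes the coefficient of variation of sentence lengths in words (population standard deviation divided by mean). This formula is an illustrative assumption. It is not what Originality.ai, GPTZero, or any other vendor actually computes, since their models are proprietary. Measuring true perplexity would also require a language model, which this sketch does not include.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word counts per sentence, splitting on ., !, or ? followed by whitespace."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (population stdev / mean).

    0.0 means every sentence has the same length; higher means more variation.
    Texts with fewer than two sentences return 0.0.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The tool is fast. The tool is cheap. The tool is good."
varied = "It works. After three weeks of testing on client sites, the tool held up under load. Mostly."
print(round(burstiness(uniform), 2), round(burstiness(varied), 2))
```

The uniform sample scores 0.0 and the varied one scores well above it. Nothing in the calculation looks at accuracy, relevance, or usefulness. Raising this kind of number by varying sentence length is cheap, and it says nothing about whether the text helps a reader.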

The practical problems with treating detector scores as SEO signals are well-documented at this point:

These tools produce consistent false positives on human writing. Academic studies and widely shared tests have shown that polished human writing regularly scores as "likely AI" on tools like Originality.ai and GPTZero, particularly when it is clear, direct, and well-structured. The tools measure a statistical proxy for "does this text resemble typical AI output," not "is this text good or bad for rankings."

Passing a detector often makes your content worse. The most common approach to “humanizing” AI text for detector bypass is adding sentence length variation, increasing grammatical complexity, and injecting unusual word choices. These changes can make the text less readable, less direct, and less useful to the human reader—which is the exact opposite of what Google’s quality systems reward.

Client requirements for “0% AI detection” are an understandable but technically misguided specification. If you’re facing this requirement, the honest conversation to have is that the client is optimizing for a metric that has no relationship to the SEO outcome they actually want. What they want is content that ranks and converts. The path to that outcome is E-E-A-T compliance, not detector bypass.

For clients with strict detector requirements that you can’t renegotiate, our Best AI Humanizers 2026 guide covers which tools produce the most natural-sounding output while maintaining the content quality that actually affects rankings.

How to Bulletproof Your Content: The SRG Method

The hybrid model that produced the best ranking results across our 12-month test is not complicated. It’s the consistent application of a specific division of labor between AI and human judgment.

AI handles: outline structure, section drafting from provided facts, variation generation for metadata and headings, and initial research synthesis.

Human handles: the hook, the personal anecdotes, the factual verification, the voice editing, and the conclusion.

The critical insight from our data: the parts of an article that carry the most E-E-A-T weight are also the parts that take the least time to write by hand. A personal anecdote injected into the introduction takes three minutes to write and contributes more to Google's experience signal than any other single element in the piece. A verified external citation from a primary source takes 90 seconds to find and contributes more to the authoritativeness signal than any AI-generated paragraph in the article.

The ROI on human input is asymmetric. Invest it in the highest-leverage moments—the opening hook, the experience signals, the factual verification, and the conclusion—and let AI handle the volume work in between.

Pro Tip: The fastest way to flag your content as low-quality to both human readers and Google’s systems is a generic, AI-generated-looking H1. Before you write a single word of body copy, run your main topic through our Free AI Blog Title Generator to lock in a psychologically optimized, high-CTR hook. An H1 that reads like a human wrote it with a specific angle and a clear value promise sets the expectation for the rest of the piece—and signals to Google that this article has a point of view worth clicking on.

The full step-by-step implementation of this hybrid workflow is in our Write a Blog Post with AI 2026 guide. It includes exact timing, prompt templates, and the editing checklist we run on every article, and it is validated against live Search Console data from 100+ published articles.


Frequently Asked Questions

Will Google manually penalize my site for using ChatGPT?

No. Google issues manual actions for specific, documented violations such as cloaking, scraped content passed off as original, unnatural link schemes, and hidden text. Using ChatGPT, Claude, or any AI model to draft a helpful, fact-checked, E-E-A-T-compliant article is not on that list and has never been.

Manual actions require a human reviewer at Google to flag your site specifically. That reviewer is looking for deliberate manipulation and spam tactics—not AI assistance in the content production process. The risk of a manual action from responsible AI-assisted content creation is effectively zero.

Do AI humanizer tools actually work for SEO?

They work for bypassing third-party detectors, which—as covered above—have no relationship to Google’s ranking systems. For actual SEO performance, AI humanizer tools often create a net negative outcome. The rewording and sentence restructuring they apply to reduce “AI patterns” frequently makes the text less readable, less direct, and less useful to the human reader.

Google’s engagement signals measure how real users interact with your content. Content that has been humanizer-processed to clear a detector score but reads awkwardly will produce higher bounce rates and lower time-on-page than a clear, direct AI draft that was lightly edited by a human. Optimize for the reader, not the detector.

How much manual human editing does an AI article need?

Based on our testing across 100+ articles, the minimum effective human intervention that produces meaningfully better ranking outcomes involves three specific actions: writing the first 100 words yourself in your own voice, injecting at least one specific personal anecdote or first-hand data point into the body, and fact-checking every statistic and citation before publishing. Beyond those three non-negotiables, the additional editing time produces diminishing returns on ranking performance.

The articles in our test that ranked best weren’t the ones with the most human editing hours invested—they were the ones where human judgment was applied to the highest-leverage moments rather than distributed evenly across the full piece.

The Verdict

Stop optimizing for arbitrary detector scores. Start optimizing for the signals that Google actually measures.

Google detects spam. It detects duplicate content at scale. It detects the absence of first-hand experience in content that claims expertise. It detects poor user engagement on pages that fail to satisfy search intent. It does not run your article through Originality.ai and does not penalize content for having a low perplexity score.

The freelancers and niche site operators who are winning in search in 2026 are not the ones who found the best AI humanizer or scored 0% on every detector. They’re the ones who use AI for the volume work and invest human judgment in the E-E-A-T signals that Google actually weights—first-hand experience, factual accuracy, genuine helpfulness, and content that earns the engagement metrics that prove it delivered on its promise.

Publish confidently. Edit rigorously. Verify every fact. That combination produces ranking results. No humanizer tool required. If you’re looking for platforms that natively produce higher-quality, human-sounding drafts to speed up this process, check out our ranking of the Best AI Writing Tools 2026.

Now that you understand the truth about AI content and Google’s actual detection systems, put that confidence to work. Head over to the Smart Remote Gigs Job Board to find high-paying remote SEO and content writing roles perfectly suited for hybrid AI workflows—vetted listings, real budgets, and clients who value operators who understand what actually moves the needle in search.