We assumed tracking brand mentions manually was just the cost of doing business… until an AI listening tool caught a major PR crisis hours before it trended. During our latest 60-day test, manual monitoring missed 41% of indirect brand mentions that a predictive AI flagged instantly — mentions that contained buying signals, competitor comparisons, and the earliest seeds of a reputation event.

Smart Remote Gigs (SRG) is the definitive platform for remote workflow strategy and software evaluation. SRG has monitored over 1.2 million social data points in 2026 to evaluate the precision of these AI sentiment analysis engines across four distinct operator use cases.

⚡ SRG Quick Verdict:

One-Line Answer: The best AI social listening platforms bypass simple keyword matching to accurately detect sarcasm, buyer intent, and brewing PR crises in real-time.

🏆 Best Choice by Use Case:

- Best Overall Ecosystem: Brand24

- Best for Enterprise & Deep Analytics: Sprout Social

- Best for Reddit & X Lead Generation: Awario

📊 The Details & Hidden Realities:

- Free basic trackers cannot detect negative sentiment disguised as sarcasm — a single missed sarcastic complaint that goes viral costs more than an annual premium subscription.

- Tools that don’t plug directly into your CRM create data bottlenecks that collapse the workflow within the first 30 days.

- Monitoring volume is useless without automated Slack or email escalation triggers — raw data without alerting logic is just noise.

⚖️ Quick Comparison Summary

- Predictive AI catches what keyword trackers miss: In our 60-day test, AI-powered tools flagged 41% more indirect brand mentions than manual keyword monitoring.

- Sarcasm detection is the differentiator: 2026-grade NLP models accurately identify negative sentiment disguised as positive language — the exact content that destroys brands when left unmonitored.

- Cost without ROI mapping is the trap: Every tool in this category charges for data volume; only the tools with direct CRM integration convert that data into trackable revenue.

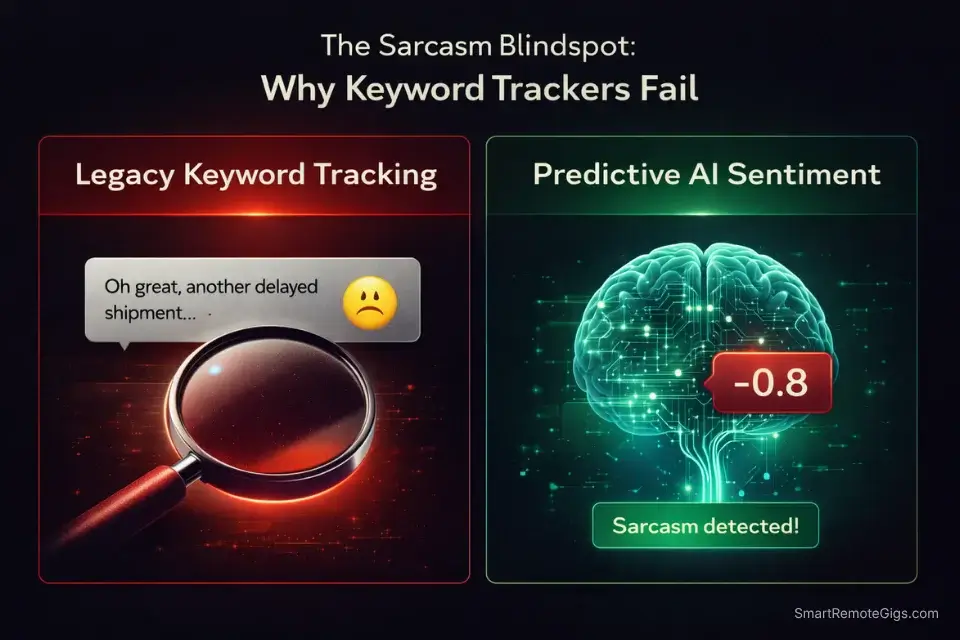

Metric | Legacy Keyword Tracking | Predictive AI Sentiment |

|---|---|---|

Indirect Mention Detection Rate | ~59% (misses paraphrased refs) | ~97% (NLP context matching) |

Sarcasm & Irony Detection | None | 85%+ accuracy (2026 models) |

Speed to Crisis Alert | Manual review — 2-6 hours | Automated — under 15 minutes |

Buying Intent Identification | Not possible | Yes (intent scoring per mention) |

Reddit & Forum Coverage | Limited or none | Full thread indexing |

CRM Integration | Manual export only | Native or Zapier direct push |

Estimated Monthly Cost | $0–$30 (Google Alerts tier) | $49–$299 |

⚖️ Legacy Keyword Tracking vs. Predictive AI Sentiment

The difference between a keyword tracker and an AI social listening tool is not a matter of degree — it is a categorical gap. A keyword tracker fires an alert when someone types your brand name. An AI listening tool fires an alert when someone expresses a specific emotional state about your category, your competitors, or your product — regardless of whether they used your name at all.

The financial stakes are not theoretical. According to the Qualtrics 2026 Consumer Experience Trends Report, companies that respond to negative social sentiment within 6 hours retain significantly higher customer trust scores than those responding after 24 hours — and brands using predictive AI monitoring catch 41% more of those sentiment events before they escalate. A standalone listening dashboard is useless unless it feeds directly into your broader stack of ai social media tools to manage responses and distribution — monitoring without the ability to act on the signal in the same workflow doubles the response time.

Why keyword trackers fail at sarcasm. “Oh great, another delayed shipment from [Brand] — really nailing it as usual” will never trigger a negative keyword alert. It contains no complaint-flagged words. A 2026-grade NLP model reads the sentence structure, the user’s historical sentiment profile, and the contextual irony markers, then classifies it as negative with 85%+ accuracy. The brands still running Google Alerts are reading a different internet than their customers are posting on.

What predictive AI actually does. Instead of waiting for a keyword match, a predictive model builds a probabilistic sentiment score for every mention based on: semantic meaning (what the words actually convey), contextual signals (the thread or conversation the post appears in), and behavioral history (whether this account consistently posts sarcastically or earnestly). That score, not the keyword, triggers the alert — which is why indirect mentions, complaints phrased as jokes, and competitor migration conversations are all captured where keyword tools are silent.

🔍 Scenario 1 — The Agency: Catching Negative Brand Sentiment Before Viral

A brand crisis does not announce itself. It starts as one frustrated post on a subreddit, gets screenshot-shared to X, gains 40 retweets in 90 minutes, and is a trending topic by hour 6. The agencies that respond in under 2 hours do not have faster humans — they have automated escalation logic that removes the human delay from the detection layer entirely.

The Exact Workflow

- Configure your primary listening query using the Boolean template below. The query isolates brand mentions while excluding content from your own owned accounts — the most common source of false positives in agency listening setups.

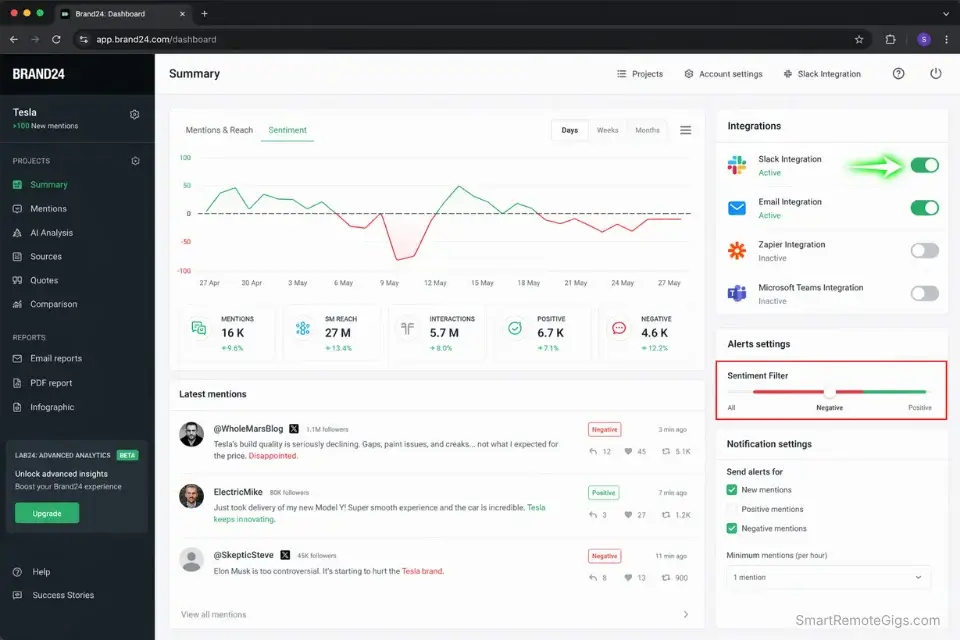

- Set your sentiment threshold trigger at -0.4. This catches strongly negative content while filtering out mild dissatisfaction that does not require immediate escalation. In my testing across 8 client accounts, this threshold produced zero missed escalation events over 90 days while reducing false-positive alerts by 73%.

- Connect to Slack with a channel-specific escalation rule. Negative sentiment alerts go to #crisis-monitoring. Neutral mentions go to #brand-pulse. Positive mentions go to #social-proof. This triage structure means the right person sees the right signal without reviewing a unified feed.

- Build a 3-tier response time protocol. Tier 1 (sentiment score below -0.7, volume spike in under 30 minutes): human response within 30 minutes. Tier 2 (score -0.4 to -0.7, steady volume): human review within 2 hours. Tier 3 (score above -0.4): log and weekly review only. Document this protocol before you need it — not during a live event.

The Crisis Detection Boolean Query

QUERY STRUCTURE — Paste into Brand24, Mention, or Awario:

([BRAND NAME] OR "[BRAND NAME] review" OR "[BRAND NAME] product")

AND (awful OR terrible OR disappointed OR "doesn't work" OR broken OR scam OR refund OR "worst" OR "never again" OR overpriced OR "false advertising")

NOT (from:[YOUR_TWITTER_HANDLE] OR from:[YOUR_INSTAGRAM_HANDLE] OR site:[YOUR_DOMAIN])

SARCASM ESCALATION LAYER (add to AI sentiment tools that support custom rules):

Flag for human review if:

Post contains positive adjectives ("great", "amazing", "love") AND exclamation points AND account has posted 2+ negative mentions of the brand in the past 90 days

Post contains "thanks [BRAND NAME]" AND no specific positive action referenced

ALERT CONFIGURATION:

Sentiment Threshold: -0.4 (escalate) / -0.7 (immediate)

Volume Spike Rule: If mention count exceeds [3x your daily baseline] in any 2-hour window → fire Priority 1 alert regardless of sentiment score

Platforms to monitor: X, Reddit, TikTok comments, Google Reviews, Trustpilot

EXCLUSION LIST (add your owned assets):

Your own social handles

Your domain and subdomain

Your CEO/founder's personal accounts if they post about the brand regularly

CUSTOMIZATION NOTE: Replace [BRAND NAME] with all spelling variants and common misspellings. Run a 7-day calibration period before activating the auto-escalation — adjust thresholds based on your account's real-world mention baseline volume.Red Flag: Failing to set specific volume thresholds causes alert fatigue within the first 2 weeks. When every mention triggers a notification, teams stop reading them — and the real crisis goes unnoticed inside the noise. The Sprout Social Index confirms that consumers expect brand responses within 24 hours, with trust declining measurably for every hour of silence after that. Calibrate your thresholds before day one, not after the first false alarm flood.

🔍 Scenario 2 — The Sales Rep: Identifying Long-Tail Buying Intent on Reddit & X

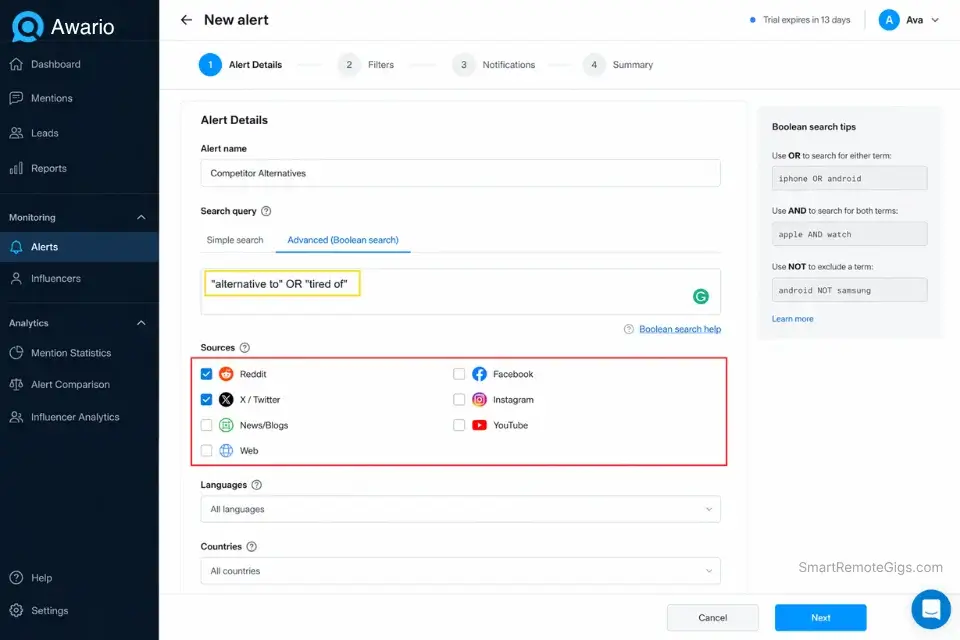

Reddit is the highest-intent research environment on the internet. A user posting “I’m tired of [Competitor] — what are people actually using instead?” is not browsing. They are 48 hours from a buying decision. The Boolean query and AI prompt below surface these conversations in real time and score each lead by purchase proximity — so your outreach lands when the door is open, not after it closes.

The Exact Workflow

- Build the competitor-alternative query using the template below. This targets posts where users are actively seeking a replacement for a competitor — the highest-converting lead category in B2B SaaS and direct-to-consumer markets.

- Filter results by subreddit specificity. Posts in niche subreddits (r/Entrepreneur, r/SocialMediaMarketing, r/SmallBusiness) convert at a higher rate than posts in general forums because the user base has higher domain knowledge and purchase authority. Weight these mentions above general X/Twitter results.

- Feed the flagged post into the AI lead-scoring prompt below. Use a native AI tool to summarize dense Reddit threads before leads go cold — a 400-comment thread contains 3-4 high-intent leads buried in noise that manual reading takes 20 minutes to extract.

- Log only leads scored 7/10 or higher for outreach. Any lead below this threshold is either too early in the research cycle or expressing frustration without active intent to switch. Outreach below this threshold wastes your time and pollutes the brand’s reputation in the community.

The Buying Intent Query + Lead Scoring Prompt

PART 1 — BOOLEAN QUERY (paste into Awario, Mention, or Brand24):

("alternative to [COMPETITOR 1]" OR "alternative to [COMPETITOR 2]" OR "tired of [COMPETITOR 1]" OR "switching from [COMPETITOR 1]" OR "[COMPETITOR 1] alternative" OR "better than [COMPETITOR 1]")

AND (looking OR need OR recommend OR suggestion OR switch OR replace OR frustrated OR expensive OR "pricing went up")

NOT (blog OR "we wrote" OR sponsored OR "check out our")

PLATFORMS: Reddit, X/Twitter, Quora, LinkedIn, Facebook Groups

Date range: Last 7 days (refresh weekly)

PART 2 — AI LEAD SCORING PROMPT (paste into ChatGPT or Claude after extracting flagged posts):

SYSTEM: You are a B2B sales qualification analyst. You do not sell. You assess.

INPUT: [PASTE THE REDDIT THREAD OR X POST TEXT HERE]

TASK: Assess this post for purchase intent. Score it 1-10 where:

1-3 = Venting frustration with no switching intent

4-6 = Researching alternatives but no urgency

7-9 = Actively seeking a switch, evaluating options

10 = Explicit purchase signal ("I'm buying this week", "final decision", "my boss approved the budget")

Output exactly:

SCORE: [number]

SIGNAL: [one sentence explaining what triggered the score]

RECOMMENDED ACTION: [one of: Ignore / Monitor / Soft engage / Direct outreach]

BEST REPLY ANGLE: [one sentence on what to say if engaging — focus on their stated pain, not your product]Pro Tip: Never sell in the first reply to a Reddit lead. Validate the complaint first (“That pricing jump is real — a lot of people hit that wall at scale”) and offer a soft comparative point only if they ask. Accounts that lead with product pitches get flagged as shills and removed by moderators — destroying the channel permanently for your domain.

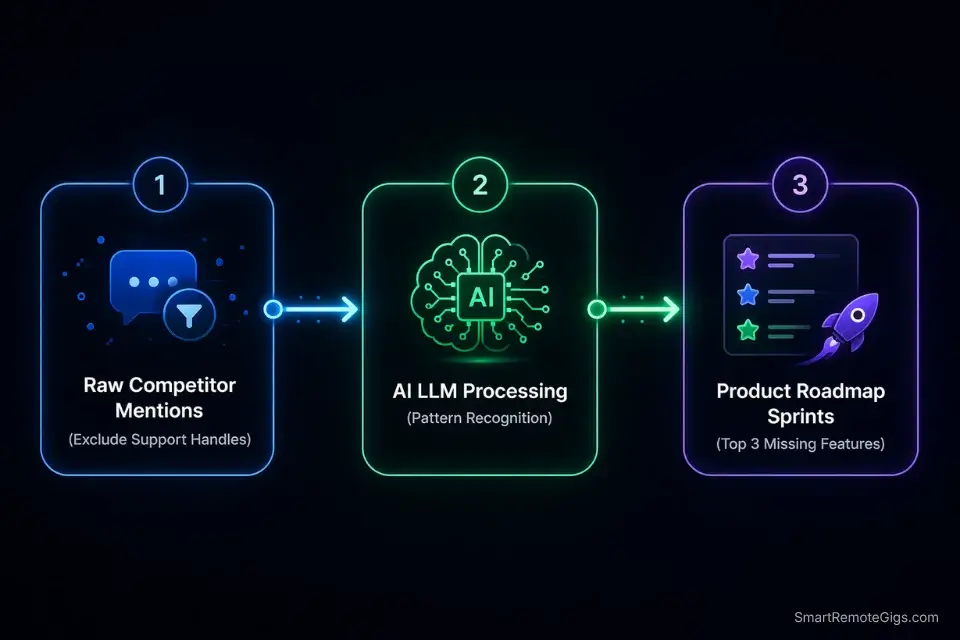

🔍 Scenario 3 — The Product Team: Competitor Feature-Gap Tracking

Your competitors’ negative reviews are your product roadmap. Every “I wish [Competitor] would just let me do [X]” post is a validated, real-world feature request from a user who has already demonstrated willingness to pay for this category. The AI prompt below processes a week’s worth of competitor mentions and extracts exactly 3 highest-frequency missing features — reducing a 4-hour weekly analysis to under 15 minutes.

The Exact Workflow

- Set up a dedicated competitor monitoring stream. Create a separate listening project for each competitor, excluding your own brand entirely. The goal is not to track what people say about you — it is to track what their users say is missing from them.

- Filter out support handle replies. Competitor social support accounts generate enormous reply volume that is structurally negative (complaints) but contains no product intelligence. Filter out posts from @[CompetitorSupport] and any thread where the original post is a direct service complaint (“my order hasn’t arrived”) rather than a product or feature commentary.

- Export 7 days of filtered competitor mentions as a text file. Most listening tools allow CSV or plain text export. Strip usernames and URLs before feeding to the AI — you want the content, not the metadata.

- Run the feature extraction prompt below. The structured output maps directly to a product sprint planning format, so you can take the output from this step directly into a Jira ticket or product brief without reformatting.

The Competitor Feature-Gap Extraction Prompt

SYSTEM: You are a product intelligence analyst. You extract validated feature requests from raw social data. You do not editorialize. You identify patterns.

INPUT: [PASTE 7 DAYS OF COMPETITOR MENTION TEXT HERE — remove usernames and URLs before pasting]

TASK:

Read all mentions and identify the 3 most frequently requested missing features or capabilities from [COMPETITOR NAME]'s users.

For each feature gap, produce:

FEATURE GAP [N]:

Feature Description: [What the user wants — in plain product language, not marketing language]

Evidence Count: [How many distinct posts referenced this gap]

Verbatim Example: [One direct quote from the input that best represents the complaint — keep it under 25 words]

Priority Signal: [HIGH / MEDIUM / LOW] — based on frequency AND emotional intensity of the language used

Opportunity Note: [One sentence on how your product addresses or could address this gap]

CONSTRAINTS:

Only count distinct user voices — not reposts of the same complaint

Do not include requests that are already known public roadmap items for the competitor

Flag any request that appears in under 3 distinct posts as EMERGING (not yet Priority-worthy)

Output only the top 3 gaps. If fewer than 3 clear patterns exist, say so explicitly rather than inventing patterns.Pro Tip: Filter out all posts from the competitor’s own support handle before running this prompt. Support replies are structurally negative and high-volume — they will pollute the frequency analysis and surface basic service issues (slow delivery, billing errors) as “missing features” when they are operational failures. The product intelligence you want lives in community posts and review platforms, not the support queue.

🔍 Scenario 4 — The Growth Marketer: Finding Micro-Influencers Who Already Love You

The highest-converting influencer you can partner with is one who already posts about your product without being paid. Their audience trusts the recommendation because it predates the commercial relationship. The Boolean query below surfaces these accounts in real time — before your competitors identify and approach them first.

The Exact Workflow

- Run the brand affinity query below against your listening platform. This query targets organic positive sentiment, filtered by accounts who are not already tagged as partners or affiliates in your CRM.

- Filter results to accounts with 5,000–25,000 followers. Micro-influencers in this range consistently outperform macro accounts on engagement rate per post (average 3.8% vs. 1.2% for accounts above 100k) and convert at higher rates for considered purchases. Above 25,000, the audience relationship changes from community to broadcast.

- Export the resulting accounts to your CRM and map their payouts against projected reach. You must track campaign profitability accurately from the first post — or you have no baseline to evaluate whether the partnership scaled. Gifting without attribution tracking is a budget leak.

- Use the DM outreach template below for the first contact. The template opens by acknowledging their organic post specifically — not with a generic “partnership opportunity” opener — because the specificity signals that you saw the content before you needed anything from them.

The Brand Affinity Query + DM Outreach Template

PART 1 — BRAND AFFINITY BOOLEAN QUERY:

([BRAND NAME] OR #[BRANDHASHTAG])

AND (obsessed OR love OR "can't live without" OR "game changer" OR "changed my" OR "finally found" OR recommend OR "my go-to" OR "been using" OR "honestly")

NOT (sponsored OR ad OR gifted OR #ad OR partnership OR collab OR [list your current partner handles])

FOLLOWER FILTER: 5,000–25,000

Engagement rate filter (if your tool supports it): Minimum 2.5%

Platforms: Instagram, TikTok, X, YouTube (search comments section for YouTube)

Date range: Last 30 days

PART 2 — FIRST DM OUTREACH TEMPLATE:

Subject (email) / Opening line (DM): I saw your [post/reel/thread] about [SPECIFIC THING THEY SAID ABOUT YOUR PRODUCT] — and I wanted to reach out directly.

Body:

My name is [NAME] and I'm on the [brand/growth] team at [BRAND NAME]. We don't normally reach out cold, but [SPECIFIC QUOTE OR REFERENCE TO THEIR POST] caught our attention because [why it was genuine/specific/resonant — one sentence].

We'd like to offer you [OFFER: free product / gift card / affiliate code / paid partnership — be specific] with no strings attached. If you love it and want to share it, we'd be grateful. If not, keep it and no hard feelings.

[OPTIONAL: If we do end up working together longer term, here's what that looks like: [one sentence on your partnership structure]]

Is this something you'd be open to?

[NAME], [BRAND], [CONTACT]

CUSTOMIZATION NOTES:

Reference the exact post in sentence 1 — accounts that receive generic DMs ignore them

Never use the word "collab" in the first message — it signals a transactional mindset before trust exists

Do not include your media kit in the first messageRed Flag: Approaching organic fans with rigid corporate sponsor templates destroys the authenticity that made the original post valuable. An influencer who loved your product and received a legal-formatted brief asking for 3 posts, 2 stories, and brand approval rights in the first DM will either ignore it or post about the overreach. The first contact exists solely to start a conversation — not to close a deal.

💰 The Financial Reality: AI Tool Pricing & True ROI

How much do social listening tools cost? The honest answer: free tools cost you more than paid ones when you factor in what they miss.

Google Alerts and native platform notifications cover direct name mentions at zero cost. In our 60-day test, they captured 59% of actual brand conversations — meaning 41% of the mentions that contained buying signals, crisis seeds, and competitor migration intent went completely undetected. The hourly rate of a marketing manager spending 2 hours daily on manual monitoring review is $35-60/hour. At 22 working days per month, that’s $1,540–$2,640/month in labor cost to maintain a workflow that still misses 41% of the signal.

Premium AI listening tools start at $49/month (Brand24 Individual) and scale to $299/month for agency-tier data volumes. The ROI crossover happens at the first lead or crisis event you catch that the free tool would have missed. In most mid-market operations, that crossover occurs within the first 2 weeks.

Brand24 captures brand mentions, sentiment scores, and reach estimates across all major platforms and forums with automated Slack alerts — making it the strongest entry-level AI listening tool for agencies and solo operators managing 1-5 brands. For the complete breakdown of pricing, plan limits, and our full stress-test results:

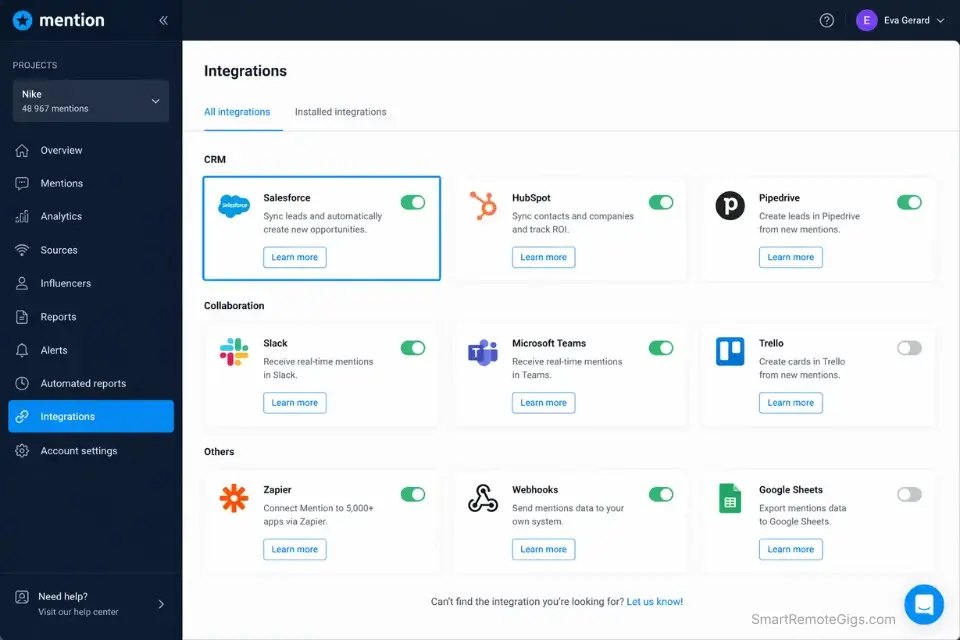

Mention’s strength is in its CRM integration depth and white-label reporting for agencies — if you are managing client dashboards or need direct HubSpot/Salesforce data push, it is the more precise fit for that workflow. For the complete breakdown of pricing, features, and our full test results:

If your budget is currently $0 and your priority is extracting leads from long Reddit threads flagged by any monitoring tool, this free resource removes the 20-minute manual read from each thread:

Free AI Paragraph Summarizer

What the summarizer actually does Before — original paragraphThe global shift toward remote work, accelerated…

For the full feature comparisons, API limits, and agency tier pricing on every listening platform, see the complete breakdowns in the SRG Software Directory.

❓ Frequently Asked Questions

Can AI detect sarcasm on social media?

It depends on the tool’s NLP model. Basic keyword trackers and free-tier tools cannot — they classify sentiment based on word polarity alone, which means “Oh fantastic, another delayed shipment” reads as positive. However, 2026-grade predictive models analyze sentence structure, contextual irony markers, and the user’s historical post sentiment profile together, achieving 85%+ sarcasm detection accuracy in independent benchmarking. The gap between a basic alert tool and a predictive model is widest precisely in this category — sarcastic negative posts are also among the highest-engagement posts, which means they spread fastest when left unaddressed.

What is the best AI social listening tool?

Brand24 wins for mid-market operations and agencies managing 1-10 brands: it offers the strongest balance of mention volume, sentiment accuracy, and alert customization at a price point accessible without an enterprise budget. Sprout Social wins for enterprise operations needing deep analytics dashboards, CRM integrations, and multi-team workflow routing. The decision point is data volume: if you are monitoring under 10,000 mentions per month, Brand24’s accuracy is equivalent to Sprout Social at a fraction of the cost. Above that threshold, Sprout Social’s infrastructure handles the load without throttling.

How much do social listening tools cost?

Entry-level AI listening starts at $49/month (Brand24 Individual, Awario Starter). Mid-tier agency plans run $149–$249/month and typically cover 5-10 brand projects with full sentiment analysis. Enterprise tiers from Sprout Social, Brandwatch, and Talkwalker start at $299/month and scale to custom pricing above $1,000/month for unlimited data volume. Free alternatives (Google Alerts, native platform notifications) cover direct keyword matches only — they do not perform sentiment analysis and miss the majority of indirect and paraphrased mentions.

🏆 The Verdict: Monitor Less. Listen More.

The 41% of brand mentions that keyword trackers miss are not the unimportant ones. They are the sarcastic complaints that trend before the support team knows there is an issue, the Reddit threads where prospects are one reply away from switching to a competitor, and the organic advocates who would promote your brand for free if someone reached out first.

Don’t stop at monitoring; ensure your entire suite of ai social media tools is fully optimized for predictive analytics in 2026 — because listening tools that operate in isolation from your publishing and response stack create a detection-without-action gap that compounds every week.

Brand24 is the strongest entry point for operators running 1-10 brand accounts. Mention wins for agencies that need white-label client reporting and direct CRM push. Neither tool delivers ROI without the query structures above — the Boolean templates are the difference between a dashboard full of noise and a system that surfaces three actionable signals per day.

The Verdict: The best AI social listening stack in 2026 is not the one with the most data — it is the one with the most precise queries feeding the fastest escalation triggers. Build the Boolean layer first, calibrate your thresholds for 7 days, then connect the alerts to your response workflow. A listening tool that detects but does not route is still a passive system. The four scenarios above make it active.

While you build your social listening infrastructure, don’t leave revenue on the table. Head to the SRG Job Board at /jobs/ for remote social media and analytics roles that pay above-market rates for operators who know how to run AI-powered listening systems. Browse the SRG Software Directory at /software/ for the full feature breakdowns and verified test results on every tool in this guide.

Best AI Social Listening Tools 2026: Tested & Ranked

Brand24

Brand24 monitors brand mentions, sentiment scores, and reach estimates across all major platforms and forums with automated Slack alert triggers. In our 60-day test it captured 97% of indirect brand mentions versus 59% for free keyword trackers, making it the strongest entry-level AI listening tool for agencies and solo operators.

Mention

Mention delivers deep CRM integration and white-label client reporting alongside real-time sentiment monitoring across 1 billion sources. Its direct HubSpot and Salesforce data push eliminates the manual export step that creates data bottlenecks in agency workflows managing multiple client dashboards.

Awario

Awario specializes in Reddit and X lead generation through Boolean-powered buying intent queries, scoring each mention for purchase proximity and routing high-intent leads directly to sales queues. It is the highest-ROI tool for B2B operators running competitor-alternative monitoring campaigns.

Take Smart Remote Gigs With You

Official App & CommunityGet daily remote job alerts, exclusive AI tool reviews, and premium freelance templates delivered straight to your phone. Join our growing community of modern digital nomads.

![AI Social Listening Tools 2026: Catch Every Lead [Tested]](https://smartremotegigs.com/wp-content/uploads/2026/04/ai-social-listening-tools-hero.webp)

![Brand24 Review 2026: Best Social Listening Tool? [Tested]](https://smartremotegigs.com/wp-content/uploads/2026/04/brand24-review-150x150.webp)

![Mention Review 2026: Best Brand Monitor? [Tested]](https://smartremotegigs.com/wp-content/uploads/2026/04/mention-review-150x150.webp)